The Company

Micron Technology (NASDAQ: MU) is one of the world’s largest manufacturers of semiconductor memory and storage products, primarily DRAM, NAND flash, and high-bandwidth memory (HBM). These chips are essential components in AI servers, data centers, PCs, smartphones, and automotive systems.

Micron operates an integrated device manufacturer (IDM) model, meaning it designs, manufactures, and sells its own memory chips, unlike many semiconductor companies that outsource production.

Memory is one of the most cyclical segments of the semiconductor industry, but the rise of AI infrastructure and HBM has significantly strengthened Micron’s long-term demand outlook.

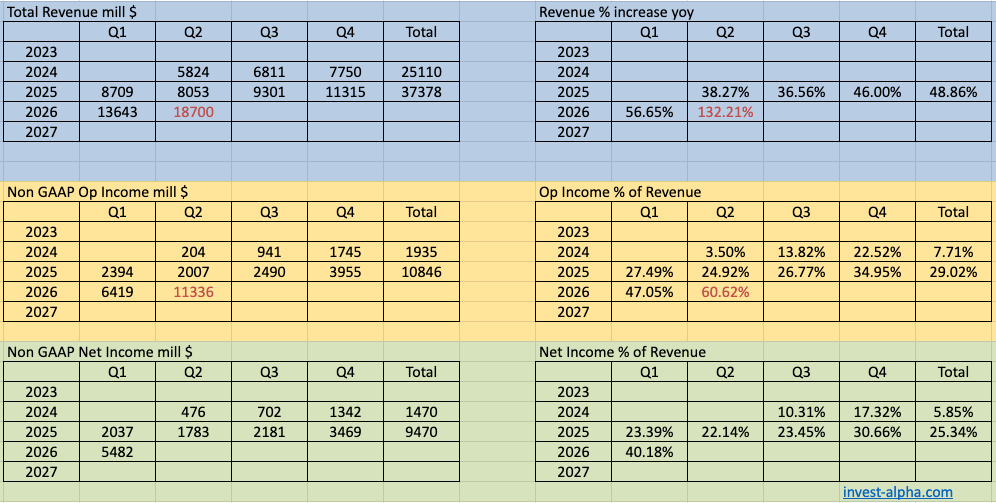

Micron Financials

Recent trend

The memory industry went through a severe downturn in 2023, driven by excess inventory and weak PC/smartphone demand.

Recovery began in 2024, driven by AI data center demand, particularly for the HBM used in Nvidia AI GPUs.

Key structural shift:

AI servers require dramatically more memory than traditional servers.

Example:

| System | DRAM per server |
|---|---|
| Traditional server | ~256-512 GB |
| AI training server | 1-2 TB+ |

Bull Case for Micron

1. AI Memory Demand Explosion

AI clusters require massive memory bandwidth. Memory is needed to enable longer context windows, deeper reasoning chains, and multi-agent orchestration.

Specifically, HBM demand is expected to grow 50-70% annually.

Example Nvidia GPU systems:

| GPU | Memory Type |
|---|---|
| H100 | HBM3 |
| B100 / Blackwell | HBM3E |
| Vera Rubin | HBM4 |

Each GPU package integrates multiple HBM stacks, and each stack is itself 8 to 12 DRAM dies high, dramatically increasing DRAM demand per accelerator.

2. Structural Memory Supply Discipline

Memory has historically suffered from oversupply, but the market is now concentrated among three major global DRAM manufacturers:

- Samsung

- SK Hynix

- Micron

This oligopoly improves pricing discipline.

3. HBM Margin Expansion

HBM is much higher margin than commodity DRAM.

Estimated relative margins:

| Memory Type | Relative Margin |
|---|---|
| Commodity DRAM | Low |
| HBM | Very high |
| LPDDR | Medium |

Micron’s HBM3E ramp is expected to drive record margins in its data center business.

4. AI Server Memory Content Growth

Memory per server is rising dramatically:

| Server Type | DRAM Content |
|---|---|
| Traditional enterprise | ~$300 |
| AI server | $3000+ |

Memory content per AI server may increase 10×.
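The 10× figure follows directly from the two dollar values in the table. The short sketch below reproduces that ratio and then shows how the average DRAM content per server would move as AI servers take a larger share of unit shipments. The function name `avg_dram_content` and the AI unit shares are hypothetical, chosen only for illustration; they are not forecasts from Micron or any other source.

```python
# DRAM dollar content per server, taken from the table above.
TRADITIONAL_DRAM_USD = 300.0   # "~$300"
AI_SERVER_DRAM_USD = 3000.0    # "$3000+", treated here as a floor


def avg_dram_content(ai_share: float,
                     traditional_usd: float = TRADITIONAL_DRAM_USD,
                     ai_usd: float = AI_SERVER_DRAM_USD) -> float:
    """Average DRAM dollars per server for a given AI share of unit shipments."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    return ai_share * ai_usd + (1.0 - ai_share) * traditional_usd


# Content multiple implied by the table.
multiple = AI_SERVER_DRAM_USD / TRADITIONAL_DRAM_USD
print(f"AI server DRAM content multiple: {multiple:.0f}x")

# Hypothetical unit mixes (illustrative assumptions, not forecasts).
for ai_share in (0.0, 0.10, 0.25):
    avg = avg_dram_content(ai_share)
    print(f"AI share {ai_share:.0%}: average DRAM content ${avg:,.0f} per server")
```

Under these assumptions, even a modest AI share of units lifts the average DRAM content per server well above the traditional baseline, which is the mechanism behind this part of the bull case.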

Bear Case for Micron

1. Memory is a Commodity

Unlike GPUs or CPUs, memory chips are largely interchangeable.

Customers can switch between:

- Samsung

- SK Hynix

- Micron

This creates severe pricing cycles.

2. Extreme Cyclicality

Memory markets historically follow boom-bust cycles.

Example:

| Year | Market |
|---|---|
| 2018 | Boom |
| 2019 | Crash |
| 2021 | Boom |
| 2023 | Crash |

Micron's earnings can swing from a $10B profit to a multi-billion-dollar loss within 18 months.

3. Samsung Competitive Risk

Samsung remains the largest memory manufacturer globally.

If Samsung aggressively increases production, it could trigger another price collapse.

4. HBM Market Share

SK Hynix currently leads the HBM market, owing to its early partnership with Nvidia.

If Micron fails to gain share, it could miss the most profitable segment.

Management Outlook

- We expect server units to grow in the low-teens percentage range in calendar 2026, driven by growth in both AI and traditional servers. (Q2 2026)

- Micron began volume shipments of its HBM4 36GB 12-Hi in 2026; the product is designed for NVIDIA Vera Rubin. (Q2 2026)

- We forecast an HBM TAM CAGR of approximately 40% through calendar 2028, from approximately $35 billion in 2025 to around $100 billion in 2028.

- We anticipate substantial new records in revenue, gross margin, EPS, and free cash flow for both the second quarter and the full fiscal year 2026, and we expect our business performance to continue to strengthen through the year.

- We will begin ramping production of HBM4 in the CQ2 time frame, in line with customer demand. HBM4 is progressing extremely well. We are very pleased with the product, which is industry-leading with the highest performance, at over 11 gigabits per second. We are also very pleased with its overall yield ramp, and we expect HBM4 to have a faster yield ramp than our HBM3E.
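The HBM TAM forecast quoted above can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below is only an arithmetic check on the two endpoints management gave; the function name `cagr` is introduced here for illustration.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over `years` years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Endpoints from the management outlook (USD billions).
hbm_tam_2025 = 35.0
hbm_tam_2028 = 100.0

implied = cagr(hbm_tam_2025, hbm_tam_2028, years=2028 - 2025)
print(f"Implied HBM TAM CAGR, 2025-2028: {implied:.1%}")
```

The implied rate comes out at roughly 42%, which is consistent with management's "approximately 40%" wording.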

Micron management highlighted three major themes.

1. AI is transforming the memory market

Management expects AI servers to drive the majority of DRAM growth over the next few years.

HBM revenue is expected to grow from near zero to several billion dollars annually.

2. HBM supply already sold out

Micron has stated that its HBM production is sold out for the next year or more.

This indicates extremely strong demand from AI GPU makers.

3. Industry pricing improving

Management said that pricing is improving in both markets:

- DRAM prices are rising

- NAND prices are recovering

This suggests the memory cycle has moved into an early recovery phase.

Total Addressable Market (TAM) and Industry Growth

The memory market includes DRAM and NAND flash.

| Market | TAM (2024) | Expected CAGR |
|---|---|---|
| DRAM | ~$120B | ~15-18% |
| NAND Flash | ~$70B | ~10-12% |
| Total Memory Market | ~$190B | ~13-15% |
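As a rough consistency check on the table, the total-market growth rate should sit near the size-weighted average of the DRAM and NAND growth rates. The sketch below computes that blend for the first year from the table's figures; the helper name `blended_growth` and the dictionary layout are assumptions made for this illustration.

```python
# Market sizes (USD billions, 2024) and CAGR ranges from the table above.
segments = {
    "DRAM": {"tam": 120.0, "cagr_low": 0.15, "cagr_high": 0.18},
    "NAND": {"tam": 70.0, "cagr_low": 0.10, "cagr_high": 0.12},
}


def blended_growth(segs: dict, key: str) -> float:
    """Size-weighted average of segment growth rates (first-year blend)."""
    total = sum(s["tam"] for s in segs.values())
    return sum(s["tam"] * s[key] for s in segs.values()) / total


total_tam = sum(s["tam"] for s in segments.values())
low = blended_growth(segments, "cagr_low")
high = blended_growth(segments, "cagr_high")

print(f"Total TAM: ${total_tam:.0f}B")
print(f"Blended first-year growth: {low:.1%} to {high:.1%}")
```

The blend works out to roughly 13% to 16%, in line with the ~13-15% shown for the total market. Over a multi-year horizon the blended rate would drift slightly higher, because the faster-growing DRAM segment gains weight each year.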

AI is expected to dramatically increase DRAM demand over the next decade.

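HBM alone is a large and fast-growing part of this: management's own forecast puts the HBM TAM at around $100 billion by 2028, up from approximately $35 billion in 2025.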

Micron Products

| Product Category | Description | % of Revenue (Approx) |
|---|---|---|
| DRAM | Main memory used in servers, PCs, and smartphones. Next-generation technology: 1γ DRAM. | ~70% |
| NAND Flash | Storage memory used in SSDs and mobile devices. Next-generation technology: G9 NAND. | ~25% |
| HBM (High Bandwidth Memory) | High-performance memory used in AI GPUs | ~3-5% (rapidly growing; a subset of DRAM) |
| Other Memory Solutions | Automotive, embedded memory, managed NAND | ~5% |

Key growth driver: HBM and data center DRAM.

Business Model

Micron follows an integrated semiconductor manufacturing model.

Step 1: Design

Micron designs memory architectures including:

- DRAM nodes

- NAND layers

- HBM stacks

Step 2: Manufacturing

Micron manufactures chips in its own fabs located in:

- United States

- Taiwan

- Japan

- Singapore

Fabrication requires extremely expensive capital investments.

Example:

Advanced memory fabs cost $15B+ each.

Step 3: Packaging

HBM chips are stacked and packaged using advanced 3D packaging techniques.

These stacks are then integrated with GPUs.

Step 4: Distribution

Micron sells to:

- Hyperscalers

- PC manufacturers

- Smartphone OEMs

- Automotive suppliers

Revenue is driven heavily by contract pricing cycles.

Major Customers

Micron sells memory to nearly every major technology manufacturer.

| Customer Type | Examples |
|---|---|
| AI GPU companies | Nvidia, AMD |
| Hyperscalers | Amazon AWS, Microsoft Azure, Google |
| PC manufacturers | Dell, HP, Lenovo |
| Smartphone companies | Apple, Samsung, Xiaomi |
| Automotive OEM suppliers | Bosch, Continental |

AI infrastructure customers are becoming Micron’s fastest growing segment.

Key Competitors

Micron operates in an extremely concentrated market.

| Competitor | Key Products |
|---|---|
| Samsung Electronics | DRAM, NAND, HBM |
| SK Hynix | DRAM, NAND, HBM |
| Kioxia | NAND Flash |

SK Hynix currently leads in HBM market share, particularly supplying Nvidia.

Founding History

Micron Technology was founded in 1978 in Boise, Idaho by:

- Ward Parkinson

- Joe Parkinson

- Dennis Wilson

- Doug Pitman

The company originally focused on memory chip design and testing.

Major turning points included:

1980s

Micron began producing DRAM chips, entering the global memory market.

1990s

The company expanded through acquisitions and technology development.

2000s

As rivals exited or consolidated, Micron emerged as one of a shrinking group of global DRAM manufacturers.

2013

Micron acquired Elpida Memory, a bankrupt Japanese DRAM maker, significantly expanding its DRAM capacity.

Today Micron is one of the largest memory companies in the world, with headquarters still in Boise, Idaho.